Training and Deploying RL for a $500 Sidewalk Robot

1. Intro: how did the project start?

I knew almost nothing about robotics, and that’s exactly why I started. After many years in AI and software engineering, I wanted to build something physical.

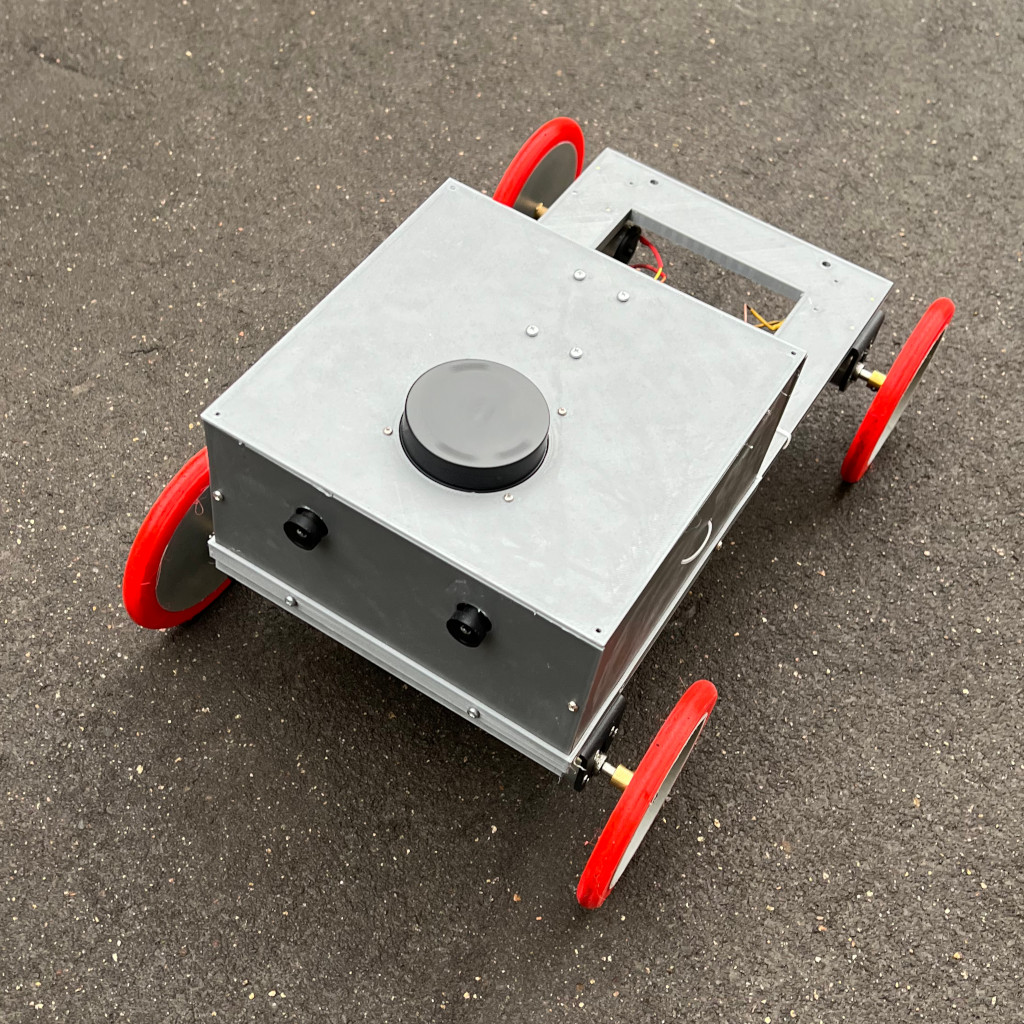

During an interview with a self-driving car company, I was surprised to learn how much delivery robots actually cost. Impressive hardware, sensors and AI-capable SOCs pushed the price well above a couple of thousand dollars. I called BS: I was sure I could do that with cheap sensors, cheap compute, or both.

Another pro was that a delivery robot is also the simplest useful robot I can realistically develop and deploy while operating alone.

2. Early choices and the death spiral of classic control

I’ll address hardware choice considerations and tests in the next posts, but let’s state here for clarity that I’ve ended up using cheapest SOC with neural inference I could find, stereo cams, 2d lidar, some classic IMU. I was planning to get depth / semantic mask of what the cameras see using neural networks and then either control robot with classic control algorithm or neural network policy.

I wrote all the robot code myself in Python because I figured that 1) ROS is too expensive for my use case, 2) I’ll spend more time configuring it than writing the code on my own, 3) after all the dust settles, my code would be a thin wrapper around neural network control policy. More importantly, as a solo developer, managing my motivation and momentum was much more important than technical details, and, honestly, writing some cool code in previously unseen field from scratch is much more fun for me than learning some huge software system with lots of history.

The field tests diagnosis was pretty brutal:

- I can make it work with expensive 3d lidar, but 2d lidar and visual navigation is too hard for classic control algorithms despite numerous tricks to reduce noise and variance. The biggest downside is that you’re always “almost there”, it feels that it’s just one more hyperparam, one technical problem, and you’ll have working algorithm – but that feeling is wrong.

- It would be very hard to debug such algorithms without a simulator. Real world tests are too expensive and algorithms are too hard to tune.

Just to illustrate. When the robot turns suddenly, camera images get blurred, this leads to noisy predictions from neural networks, which leads to robot turning again (it detects some obstacle very close), and the death spiral starts.

3. Writing a simulator from scratch

It was time to introduce a simulator to be able to develop faster.

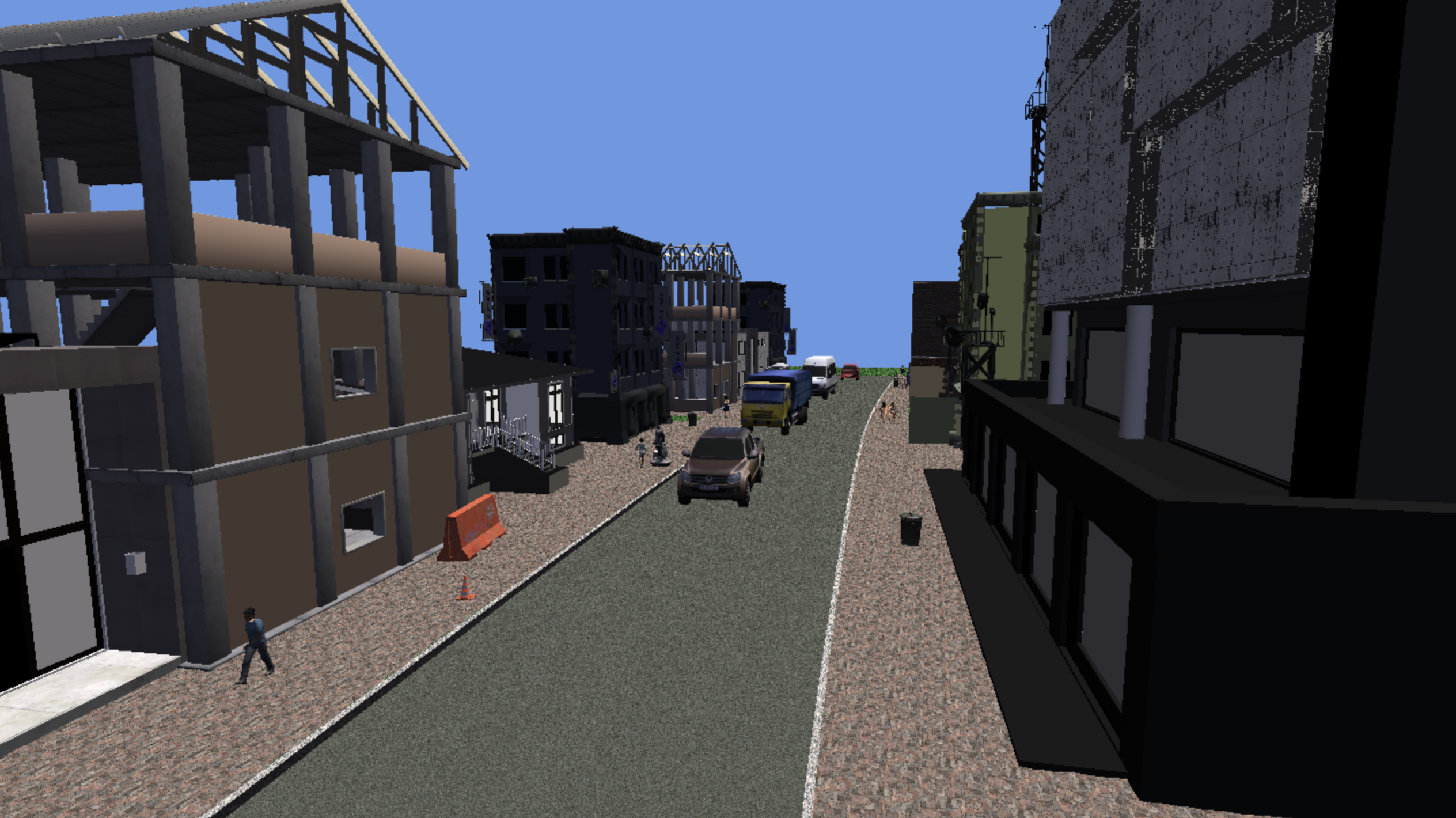

The design incentives were the same, but this time I factored in consumer-grade hardware I have (2x RTX 3080 12GB VRAM), which is below minimal requirements for some commercial-grade simulators and pretty modest needs for my task. I decided to roll out my own 3d simulator for sidewalk delivery robots.

The simulator would generate some sidewalk and road geometry, place pedestrians, parked/moving vehicles, add some buildings, obstacles on sidewalks. I’ll just create virtual robot in my simulated world, model observations and debug my control algorithms.

I started with one of the popular Python 3d libraries, but it felt slow and I faced some age-old OpenGL driver issues. I ended up using a game engine I wrote 20 years ago, porting Direct3D 9 to Vulkan was hard, but fun. My unit test suites sped up 3x.

4. What the policy actually sees

You can see the simulated inputs in the video. That’s:

- left/right camera images

- depth map – estimate of how far point shown on the pixel is from the robot

- semantic map – predicted semantic class of the point (yellow – pedestrian, green – sidewalk, blue – buildings etc.)

- lidar’s signal, i.e. angle+distance pairs

- direction to goal in local coordinate frame (G_l on the picture)

- angular velocity and linear velocity estimates

5. Decalibration and noise

I started working with ground truth values, but would use trained neural networks for depth/semantic prediction tasks to model real-world noise later.

Later I added numerous systematic / random noise generators to match real-world conditions and hardware.

Camera (per-reset, independent left/right)

- Principal point offset

- Focal length mismatch

- Brightness offset

- Contrast scale

Camera Motion Artifacts (joint for both cameras)

- Motion blur from pixel velocity

- Rolling shutter warp

IMU — noise on all channels (heading, gyro, accel)

LiDAR — range noise

Steering

- Torque noise

- Turning deadband

- Stall deadband

6. Training SAC: moving from Pytorch+SB3 to JAX for performance

Understanding that classic control algorithms are a dead end for my case and having working simulator at hand, I implemented training a soft actor-critic RL policy to control the robot.

I have a very expensive environment and need to collect observations asynchronously. Simulators fill up the replay buffer while training samples from it independently. Most libraries tie the two together which is too limiting for my case.

My initial implementation was in PyTorch + SB3 and had to wait if one of the simulator environments needed to reload. At some point I decided to rewrite everything in JAX + RLax — after all, JAX has a vibrant RL ecosystem and it’ll be super easy, right? Not at all.

Turns out none of the JAX libraries fully fit my needs. On the other hand, a SAC implementation based on CleanRL is just a couple hundred lines. Said and done.

Now I have multiple simulators filling up the replay buffer, training samples from it and everything works in full asynchronous mode.

7. Training SAC: tuning hyperparameters

Tuning RL algorithms, especially in the actor-critic world, is pretty hard. In SAC, you have a pretty complex information flow:

- simulators

- replay buffer collects experiences from the simulator

- two critic neural networks are trained to predict how good given action is in given state

- we maintain exponentially moving averages of such networks’ weights

- actor neural network is trained to predict the maximum of the critic in given state (remember, actions are continuous)

- we sample actions from that networks and feed it to the simulator, five hops away from it

Usually, the mistake is in some computation along that road. And errors compound and smear along the whole system.

Here are some bugs I’ve found and fixed in my implementation:

- I was losing “done” flag in some cases when the episode ended – this would derail Q-networks and drive them bananas

- I was sampling action after the simulation step, i.e. action-observation pairs were wrong

Other hyperparameters: reward scale for successful and failed episodes, gamma etc. needed to be tuned just by guesstimating based on tensorboard and basic math.

Worth noting some important variables I monitor in RL training above the usuals:

- mean and std for q- and target networks predictions and their histograms

- residual variance and errors of critics’ predictions

I should add that many of those vars are interdependent, counter-intuitive, and need deep understanding of SAC algorithm, especially the key equations.

8. Puddles, battery fires, and what actually breaks when you go out

Outside world is pretty messy. One moment you’re reattaching wire that disconnected for no apparent reason, then you’re fixing connection issues.

The robot almost drowned in a large puddle of melting snow water. The chassis held up, I disassembled it and was surprised to find no water inside.

The battery holders I ordered had 0.2mm wires — rated for a flashlight, not a robot pulling 5-7 amps. I smelled burning plastic before I saw the smoke. Now I always add a fuse and solder the thickest wires possible.

I learned to fix all the cables thoroughly with hot glue and to always take the laptop with me to fix the code in the wild.

My favorite discovery: the robot consistently veered left during every test. After weeks of blaming my navigation algorithm, it turned out to be a 0.2 volt ground reference offset between the motor driver and the compute board.

But, overall, the policy worked better than I expected.

9. What’s next?

Right now I’m continuing to cross sim-to-real barrier. I run the robot in real conditions and take control when the policy seems to go crazy. Then I tune my policy with DAgger. Wash-rinse-repeat. Will produce a write-up once the results are ready.

As always, feel free to drop me a line if you have questions and I’ll be glad to collaborate or give a talk about my adventures.